I recently attended a conference on AI in healthcare, like so many taking place across the country.

The room was electric. Slide after slide promised a revolution: flawless predictions, fewer mistakes, faster diagnoses. Some speakers even floated the idea that, one day, medicine might not need human clinicians in the same way.

People were leaning forward in their seats. Nodding. Smiling. And I felt my stomach tighten.

It wasn’t that I oppose innovation. I’ve spent years studying healthcare systems. I know how slowly change happens, and I know the vested interests in the current way of doing things.

But as the applause filled the room, a different image came to mind.

My family doctor.

He’s not a Nobel laureate.

He doesn’t have the most advanced systems.

He probably doesn’t appear on innovation panels.

But when my results are uncertain, he looks at me – not just at a screen.

When something is complex, he explains.

When a decision has to be made, he owns it.

And I realized that what I trust isn’t perfection.

It’s responsibility.

And I wondered: When AI helps decide my care, who carries that responsibility? And if something goes wrong, who carries the weight of that decision?

Artificial intelligence (AI) is steadily making its way into the practice of medicine. Not with robots wearing white coats and stethoscopes, but through software embedded in hospital systems. Its uses today range from administrative support to clinical assistance, and in some cases, patient-facing tools. Adoption is still uneven and often experimental, but the footprint is growing.

In many hospitals today, AI is used behind the scenes to support operational tasks: drafting clinical notes, organizing medical records, prioritizing imaging studies, or generating risk alerts for conditions such as sepsis. Some health systems are also piloting patient-facing tools – AI-powered symptom triage, chat-based information assistants, agentic scheduling, and more. A recent overview of 2025 hospital AI trends highlights just how rapidly these operational and clinical use cases are expanding. AI may not be visible in every exam room – but it is increasingly embedded in how care is delivered.

Recent data published in JAMA Network Open show that adoption remains uneven, even as interest accelerates. Medical providers have varying levels of budget, expertise, and appetite for adopting new technologies, and AI is not immune to those drivers. For now, these systems are typically framed as powerful assistants rather than independent decision-makers. Final treatment decisions, at least formally, remain in the hands of human clinicians.

When AI moves from the background to the bedside

Now that patients are being exposed to concrete AI tools, is this exposure increasing trust… or eroding it? Healthcare isn’t only about getting the technically correct answer. It’s about how that answer reaches us: who explains it, who stands behind it, and whether we feel seen and heard in the process. Healthcare has always required trust. Not just in knowledge, but in people. We trust that someone is accountable. That someone can answer questions. That someone carries responsibility if things go wrong. So, as AI becomes woven into care, the deeper question isn’t whether the system is accurate. It’s whether we still know who – or what – we are trusting.

Medicine has always evolved and trust has always mattered

Medicine has always evolved through innovation: new tools, new treatments, new knowledge. But for most of its history, medical decisions clearly belonged to a person. A doctor listened, interpreted symptoms, made a choice, and stood behind it. As sociologists of medicine have long argued, trust is rooted in professional accountability, not technical precision.

AI changes that structure. When algorithms enter the decision process, judgment is no longer held by one person alone. It becomes shared – between physicians, healthcare systems, and software. But patients don’t experience innovation as performance metrics. When a navigation app makes a mistake, it’s inconvenient. When a streaming algorithm misjudges your taste, it’s annoying. But when AI influences a medical decision, the stakes are different. Vulnerability is involved. The concern isn’t technology itself; it’s accountability. If something goes wrong, who answers for it?

A year ago, nearly two-thirds of U.S. patients reported low trust in their healthcare system to use AI responsibly, and more than half questioned whether harm could be prevented. And for patients who have already experienced bias or dismissal, that question carries even more weight. Research shows that individuals who have experienced discrimination in healthcare report significantly lower trust in their system’s ability to use AI responsibly or prevent harm.

Patients aren’t anti-AI and why that matters for healthcare

Research shows a consistent pattern: people are comfortable when AI reduces paperwork or improves efficiency, but grow cautious when it influences diagnosis or treatment. Patients aren’t rejecting technology. In fact, surveys suggest trust in AI increases when patients already trust their clinicians. Some patients expect that AI will improve their relationship with their doctor – and those with higher trust in their physician were far more likely to see AI as beneficial.

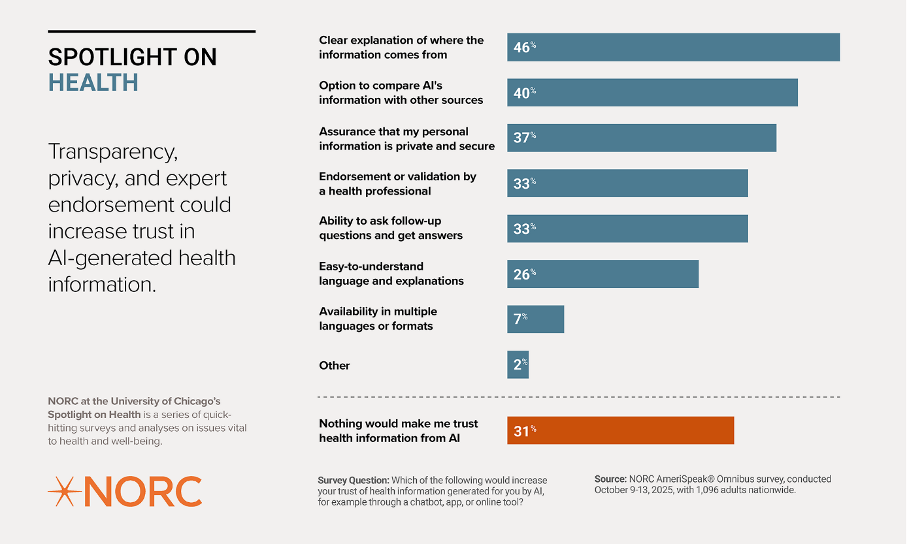

Trust in AI-generated health information increases when three things are visible: transparency, strong privacy protections, and endorsement by trusted experts. People want to know how a system works, how their data is used, and whether real clinicians stand behind the information. National survey data show this pattern clearly: many Americans remain skeptical of AI in healthcare unless they see these trust cues in place.

Healthcare leaders often emphasize performance: accuracy, efficiency, outcomes. Patients focus on something else: whether someone is clearly in charge. Automated triage systems or predictive risk scores that shape care pathways without clear explanation can create the perception that no one is. Even signaling heavy AI use can reduce trust. Recent reporting also highlights why this matters: one investigation warns that chatbot misinformation – from incorrect symptom guidance to inappropriate care recommendations – could cause significant patient harm if relied on without clear human oversight.

The pattern is clear: patients embrace AI that strengthens care – and hesitate when it obscures accountability.

The future of healthcare: the deep questions AI is opening up

Will AI become part of everyday medicine? Almost certainly. But its success won’t hinge on performance alone. Medicine is not just a technical practice. It is a social and moral one. As AI begins to guide aspects of care, the deeper questions aren’t about speed or accuracy. They’re about values. Will data outweigh patient stories? Will risk scores shape what doctors feel obligated to do? What happens when human judgment and algorithmic recommendations diverge?

Trust, responsibility, and visible accountability remain the foundation of care. If AI strengthens that foundation – by supporting clinicians while keeping responsibility clear – it can enhance medicine. If it blurs who owns decisions, even the most accurate system will struggle to earn acceptance.

I left that conference still believing in innovation.

But I left more certain of something else: in medicine, progress matters – and so does knowing who stands behind it.