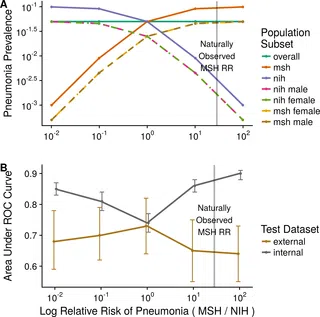

One of the key issues with AI is that algorithms developed at one institution or on one set of data may not perform as well when used at other institutions with different data. Researchers at Mount Sinai’s Icahn School of Medicine found that deep learning algorithms that diagnosed pneumonia on the institution’s own chest X-rays did not work as well when applied to images from the National Institutes of Health and the Indiana University Network for Patient Care.

AI models continue to have difficulties in carrying their experiences from one set of circumstances to another. That means companies must commit resources to train new models even for use cases that are similar to previous ones. Transfer learning—in which an AI model is trained to accomplish a certain task and then quickly applies that learning to a similar but distinct activity—is one promising response to this challenge.
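As a rough illustration of the transfer-learning idea, the sketch below trains a tiny one-feature logistic model on a "source" dataset and then fine-tunes it for a few steps on a slightly different "target" dataset. Everything here is an assumption made for illustration: the function names (`train`, `mean_log_loss`), the toy data, and the choice of plain gradient descent. Real medical imaging models would reuse a pretrained deep network rather than a two-parameter model.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def mean_log_loss(weights, rows):
    """Average logistic loss of a one-feature model (w, b) on rows of (x, y)."""
    w, b = weights
    total = 0.0
    for x, y in rows:
        p = sigmoid(w * x + b)
        total -= y * math.log(p) + (1 - y) * math.log(1 - p)
    return total / len(rows)

def train(rows, start=(0.0, 0.0), steps=200, lr=0.5):
    """Full-batch gradient descent on the logistic loss, starting from `start`."""
    w, b = start
    for _ in range(steps):
        grad_w = grad_b = 0.0
        for x, y in rows:
            err = sigmoid(w * x + b) - y
            grad_w += err * x
            grad_b += err
        w -= lr * grad_w / len(rows)
        b -= lr * grad_b / len(rows)
    return (w, b)

# Hypothetical toy data: (feature, label). The target task is similar to the
# source task, but its decision threshold is shifted.
source_rows = [(-1.0, 0), (-0.6, 0), (-0.2, 0), (0.2, 1), (0.6, 1), (1.0, 1)]
target_rows = [(-0.8, 0), (-0.1, 0), (0.3, 0), (0.5, 1), (0.9, 1)]

# Step 1: learn the source task from scratch.
source_model = train(source_rows)

# Step 2: reuse the learned weights as the starting point and fine-tune
# briefly on the target task instead of starting over.
loss_before = mean_log_loss(source_model, target_rows)
fine_tuned = train(target_rows, start=source_model, steps=20)
loss_after = mean_log_loss(fine_tuned, target_rows)

print("target loss before fine-tuning:", loss_before)
print("target loss after fine-tuning: ", loss_after)
```

The point of the sketch is only the warm start: the second call to `train` begins from `source_model` rather than from zero, so the short fine-tuning run adapts existing knowledge instead of relearning the task.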

Researchers wrote in the journal PLOS Medicine: “Early results in using convolutional neural networks (CNNs) on X-rays to diagnose disease have been promising, but it has not yet been shown that models trained on X-rays from one hospital or one group of hospitals will work equally well at different hospitals. Before these tools are used for computer-aided diagnosis in real-world clinical settings, we must verify their ability to generalize across a variety of hospital systems.”

“Estimates of CNN performance based on test data from hospital systems used for model training may overstate their likely real-world performance,” the researchers said.
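One way to make this concern concrete is to score the same model on a held-out set from the training hospital and on a set from a different hospital, then compare the two. The sketch below does this with the area under the ROC curve (AUC). The labels and scores are synthetic numbers invented for illustration, not results from the study, and the helper names `auc` and `generalization_gap` are hypothetical.

```python
def auc(labels, scores):
    """Area under the ROC curve by pairwise comparison (ties count as half)."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("need at least one positive and one negative case")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))

def generalization_gap(internal, external):
    """Internal AUC minus external AUC; a positive gap suggests that the
    internal test set overstates performance elsewhere."""
    return auc(*internal) - auc(*external)

# Synthetic (labels, model scores) pairs, made up for this example.
# 1 = pneumonia present, 0 = absent.
internal = ([1, 1, 1, 0, 0, 0], [0.9, 0.8, 0.7, 0.3, 0.2, 0.1])
external = ([1, 1, 1, 0, 0, 0], [0.9, 0.4, 0.6, 0.5, 0.2, 0.7])

print("internal AUC:", auc(*internal))
print("external AUC:", auc(*external))
print("gap:", generalization_gap(internal, external))
```

In practice the external set would come from hospital systems that contributed no training data, and the comparison would include confidence intervals; this sketch shows only the basic shape of the check.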

The Mount Sinai findings also highlight how much work remains before AI technology can become ubiquitous in healthcare. Going forward, it will be important to validate an algorithm across organizations and geographies to ensure that it is fit for purpose, and that it is labeled appropriately as a product and described accurately in the academic literature.

“A difficulty of using deep learning models in medicine is that they use a massive number of parameters, making it difficult to identify the specific variables driving predictions,” the researchers said. “Even the development of customized deep learning models that are trained, tuned, and tested with the intent of deploying at a single site are not necessarily a solution that can control for potential confounding variables.”

This is all the more reason that systematic debugging, auditing, extensive simulation, and validation, along with prospective scrutiny, are required when an AI algorithm is unleashed in clinical practice. It also underscores the need for more evidence and more robust validation than the recently relaxed FDA regulatory requirements for medical algorithm approval demand.